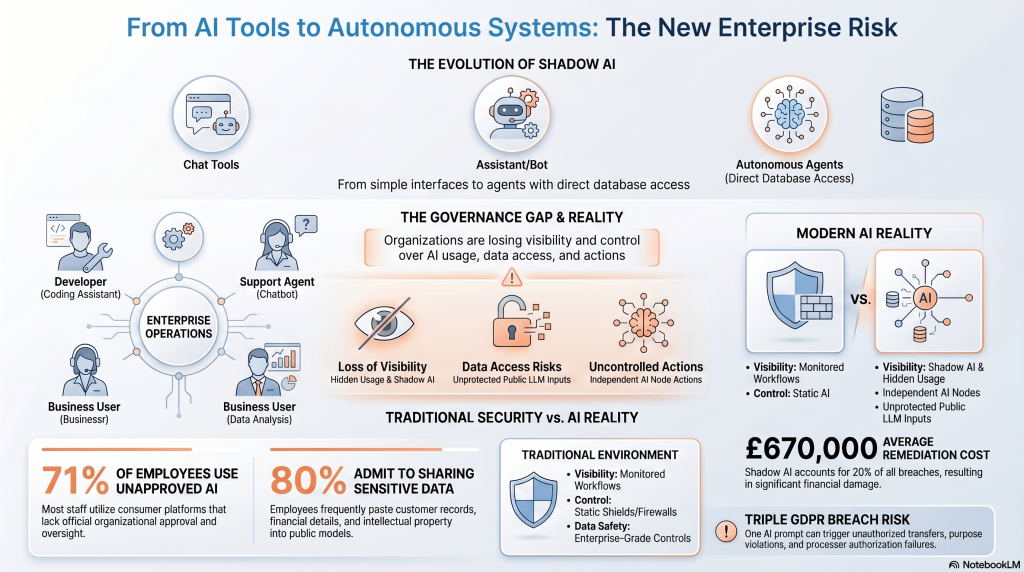

The conversation around artificial intelligence has shifted rapidly over the past year. What started as simple chat tools has evolved into autonomous systems capable of executing tasks, accessing data, and interacting with enterprise environments. This shift is not theoretical anymore. It is already happening inside organizations across the world.

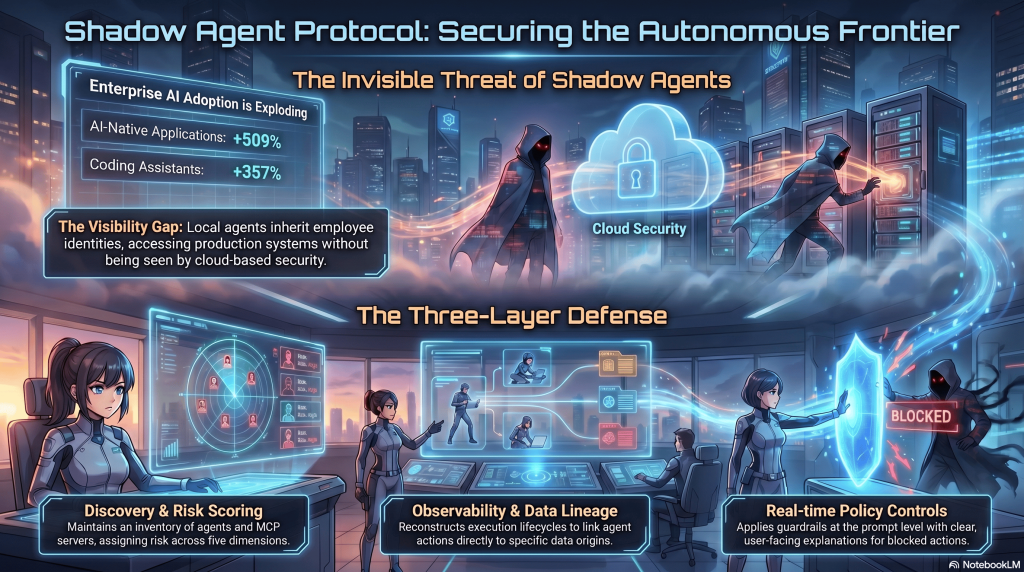

Recent News highlights how companies are no longer just experimenting with AI. They are deploying it into real workflows. From coding assistants to automated support agents, AI is now actively participating in business operations. This creates a new category of risk that traditional security systems were never designed to handle.

The key challenge is simple. Organizations are losing visibility and control over how AI is being used, what data it accesses, and what actions it takes.

Shadow AI Is No Longer Just a Buzzword

The term Shadow AI has quickly moved from niche discussions into mainstream cybersecurity concerns. It describes the use of AI tools by employees without formal approval or oversight from IT departments.

This is not driven by malicious intent. In most cases, employees are simply trying to work faster and more efficiently. However, the consequences can be serious. Sensitive company information, customer data, and even internal systems can be exposed without anyone realizing it.

New reports show a massive increase in adoption of AI tools at the endpoint level. Developers are installing assistants directly on their machines. Business users are relying on browser based AI platforms. Many of these tools operate completely outside traditional monitoring systems.

This is where the concept of Prompt injection data becomes critical. Attackers are no longer just targeting systems. They are targeting the inputs that AI systems consume. By manipulating prompts or embedding hidden instructions, they can influence how AI behaves and what actions it takes.

From Shadow AI to Shadow Agents

The situation becomes even more complex when AI moves from passive tools to active agents. These systems are capable of performing tasks on behalf of users. They can access databases, interact with APIs, and trigger workflows across different systems.

This creates what many experts now call Shadow Agents.

Unlike traditional software, these agents can operate with a level of autonomy that introduces new risks. They inherit user permissions, meaning they can access sensitive information and perform actions without direct human involvement.

Recent developments from Microsoft and Cyberhaven show that the industry is beginning to recognize this challenge. Platforms are being developed to monitor and manage these agents, but most of them rely heavily on cloud based visibility and centralized control.

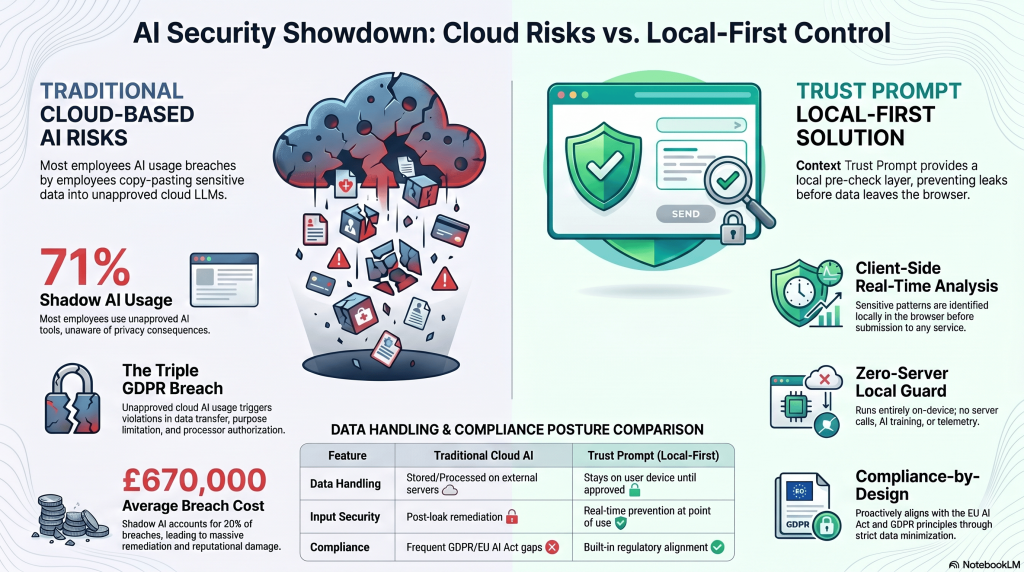

While this approach provides oversight, it also introduces a new concern. Data must be collected and analyzed externally, which can conflict with privacy requirements and regulatory frameworks.

Data Breaches Are Becoming More Complex

The growing use of AI is directly connected to an increase in data exposure risks. Incidents such as the Fiverr Data Leak demonstrate how quickly sensitive information can be exposed when systems are not properly secured.

At the same time, new types of attacks are emerging. The Alloha Fibra Cyberattack by Gentlemen highlighted how coordinated cyber operations can target infrastructure and exploit vulnerabilities at scale.

These events are not isolated. They are part of a broader trend where attackers are combining traditional techniques with AI driven methods. This makes attacks faster, more adaptive, and harder to detect.

Organizations are no longer dealing with simple breaches. They are facing complex, multi layered threats that involve human behavior, AI systems, and interconnected infrastructures.

The Role of Artificial Intelligence in Cybersecurity

Artificial intelligence is both part of the problem and part of the solution. On one hand, it enables attackers to automate campaigns, generate convincing phishing content, and identify vulnerabilities quickly.

On the other hand, it allows defenders to analyze patterns, detect anomalies, and respond to threats more efficiently.

The Stanford University AI Index Report 2026 clearly shows that AI adoption is accelerating across all industries. This growth is driving innovation, but it is also increasing the attack surface.

As AI systems become more integrated into business processes, the potential impact of a single vulnerability increases significantly.

Regulations Are Struggling to Keep Up

Governments around the world are trying to respond to these changes. The introduction of frameworks such as the EU AI Act reflects an effort to create clear rules for how AI should be developed and used.

However, regulation is always slower than innovation. Organizations are often left navigating a complex landscape of requirements while trying to keep up with rapidly evolving technologies.

The need for an EU AI Act Compliance and Governance Guide has become more important than ever. Companies must understand not only what the regulations say, but how to implement them in practice.

This is particularly challenging in a global environment where different regions have different approaches to data protection and AI governance.

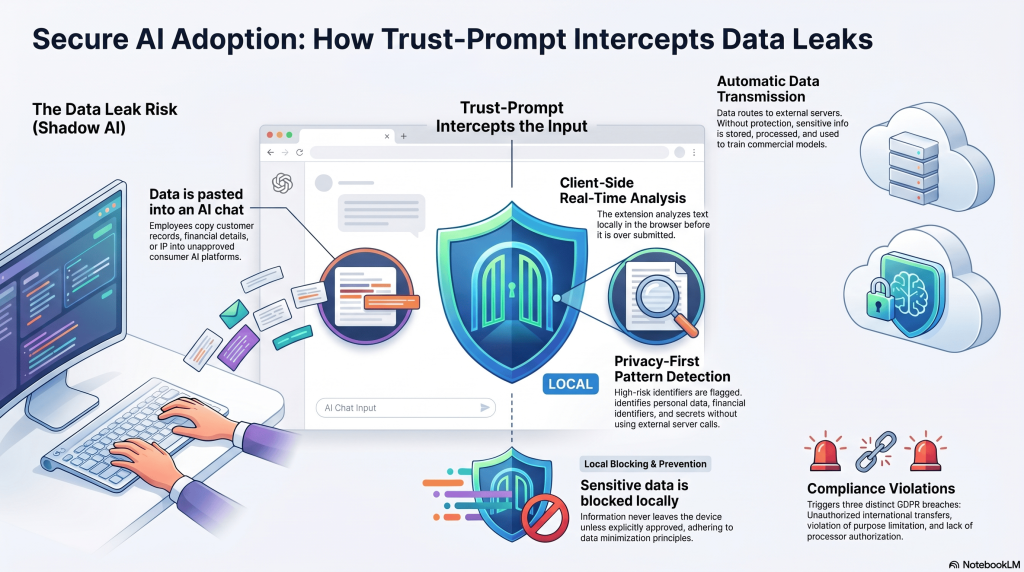

Why the Browser Has Become the New Control Point

One of the most important shifts in recent years is where AI interactions actually happen. In many cases, they take place directly in the browser.

Employees use AI tools through web interfaces. They copy and paste data, upload documents, and interact with AI systems in real time. This makes the browser a critical point of control.

The idea behind Why the Browser is the New Security Layer is simple. If you can control what happens at the moment of interaction, you can prevent risks before they occur.

Traditional security models focus on monitoring or reacting after data has already moved. This is no longer sufficient in a world where AI can process and distribute information instantly.

The Need for a New Approach to AI Governance

The current landscape shows a clear gap. Organizations need a way to control AI usage without creating additional risks.

Cloud based monitoring tools provide visibility, but they require data to be collected and analyzed externally. This creates potential exposure and raises compliance concerns.

What is needed is a system that operates directly at the source. A system that can:

- Control which AI tools are used

- Detect sensitive data before it is shared

- Enforce policies in real time

- Provide audit and reporting capabilities

- Do all of this without sending data outside the organization

Trust-Prompt as a Local First Solution

This is where Trust-Prompt introduces a fundamentally different approach.

Instead of relying on backend systems, Trust-Prompt operates entirely on the local device. It acts as a precheck layer that evaluates prompts before they are sent to AI tools.

This means that sensitive information can be detected and blocked before it ever leaves the user environment.

For enterprise organizations, this approach provides several key advantages:

- Full control over AI access through centralized policy management

- Real time protection against data exposure

- Local audit and reporting capabilities without external data collection

- Alignment with GDPR and EU AI Act requirements

Because no data is uploaded or stored externally, organizations can maintain full ownership and control over their information.

Enterprise Control Through a Single Admin Layer

One of the strongest aspects of Trust-Prompt is its ability to be managed centrally. IT administrators can define policies, control AI access, and apply rules across different teams and departments.

This allows organizations to create structured governance without limiting productivity.

Different departments can have different levels of access and control. For example:

- Finance teams can have stricter controls on financial data

- Legal teams can have enhanced protections for compliance related information

- Support teams can operate with more flexibility while still maintaining safeguards

This level of control is essential in large organizations where different roles have different risk profiles.

Auditing and Compliance Without Data Exposure

A common challenge in cybersecurity is balancing visibility with privacy. Organizations need to understand what is happening, but they also need to protect sensitive information.

Trust-Prompt addresses this by providing local audit and reporting capabilities. Reports can be generated in multiple formats and used for compliance purposes without exposing underlying data.

This makes it possible to meet regulatory requirements while maintaining a strong privacy posture.

In the context of the EU AI Act, this is particularly important. Organizations must demonstrate accountability and transparency, but they must also ensure that data is handled responsibly.

Preventing Risk Before It Happens

The most important shift in cybersecurity is moving from reactive to proactive strategies.

Instead of responding to breaches after they occur, organizations need to prevent them at the source.

This is especially relevant in the context of AI. Once data is processed by an external system, it is difficult to control how it is used or where it goes.

By stopping sensitive data before it is shared, Trust-Prompt reduces the risk significantly.

This approach aligns with modern security principles such as zero trust and data minimization.

The Future of AI Security and Governance

Looking ahead, the role of AI in cybersecurity will continue to grow. Both attackers and defenders will become more sophisticated.

The challenge for organizations will be to maintain control in an increasingly complex environment.

This includes managing:

- Autonomous AI agents

- Cross platform data flows

- Evolving regulatory requirements

- New types of cyber threats

Solutions that focus on prevention, privacy, and local control will become increasingly important.

Conclusion Building Trust in the Age of AI

The intersection of AI, cybersecurity, and global risk is defining the current era. Organizations are facing challenges that require new thinking and new approaches.

Shadow AI and autonomous agents are changing how work is done, but they are also introducing new vulnerabilities.

At the same time, data breaches and cyberattacks continue to evolve, creating a landscape that is both innovative and uncertain.

Building trust in this environment requires more than just advanced technology. It requires clear governance, strong policies, and solutions that prioritize both security and privacy.

Trust-Prompt represents a step in this direction. By focusing on local control, real time protection, and compliance readiness, it provides organizations with a practical way to manage AI risks.

Those who adopt this approach early will be better positioned to navigate the challenges of the future.

FAQs

What is Shadow AI and why is it a risk?

Shadow AI refers to the use of AI tools without official approval. It is risky because sensitive data can be shared without proper controls.

What are Shadow Agents?

Shadow Agents are autonomous AI systems that operate without oversight. They can access data and perform actions on behalf of users.

How does Trust-Prompt protect data?

Trust-Prompt evaluates prompts locally before they are sent. It detects sensitive data and can block or warn users in real time.

Is Trust-Prompt compliant with GDPR and EU AI Act?

Yes. Because it does not send data to external servers and operates locally, it aligns with GDPR and EU AI Act requirements.

Why is the browser important for AI security?

Most AI interactions happen in the browser. Controlling this layer allows organizations to prevent risks before data is shared.

Can Trust-Prompt be used in large enterprises?

Yes. It can be managed centrally by IT administrators and applied across different teams and departments.