Recent statistics reveal a sharp rise in reported data breaches across Europe. In Spain alone, authorities logged nearly 2,800 personal data breach notifications in 2025, with ransomware and cyber intrusions dominating the list. At the same time, global research highlights how the widespread use of public AI tools is creating new, often invisible pathways for sensitive data to leave organisations.

Spain Records Nearly 2,800 Personal Data Breach Notifications in 2025

Ransomware attacks and external cyber intrusions accounted for the majority of incidents. Many breaches involved personal data of customers, employees or patients, triggering mandatory reporting under GDPR.

While ransomware remains the most visible threat, a significant portion of notifications stems from accidental or unauthorised data exposure — a category that is harder to detect and often under-reported.

AI and Public Tools Are Driving a New Wave of Breaches

Public AI platforms like ChatGPT, Gemini and Claude are now embedded in daily workflows. Employees frequently use these tools to summarise reports, draft emails or analyse data. However, when sensitive information is pasted into consumer-grade AI services, it can result in multiple GDPR violations at once: unauthorised international transfers, purpose limitation breaches and use of unapproved processors.

Research shows that AI-related data movement significantly expands the attack surface, especially when organisations lack visibility into what data is being shared and where. This trend is forcing privacy professionals to rethink traditional approaches. More details in this article: "AI and data breaches force new approach to privacy".

Why Traditional Security Often Reacts Too Late

Most organisations rely on endpoint protection, email filters and traditional DLP solutions. These tools typically detect issues only after data has already left the environment — often hours or days later. By then, the breach has occurred, and remediation becomes expensive and complex.

The real problem occurs at the point of input: the moment an employee copies and pastes data into a public AI chat window. As AI adoption accelerates, the need for proactive, user-level controls becomes urgent. For more on this evolving challenge: "Data Privacy Day 2026: AI on the rise – how to protect your data".

A Practical Prevention Layer: Local Prechecks

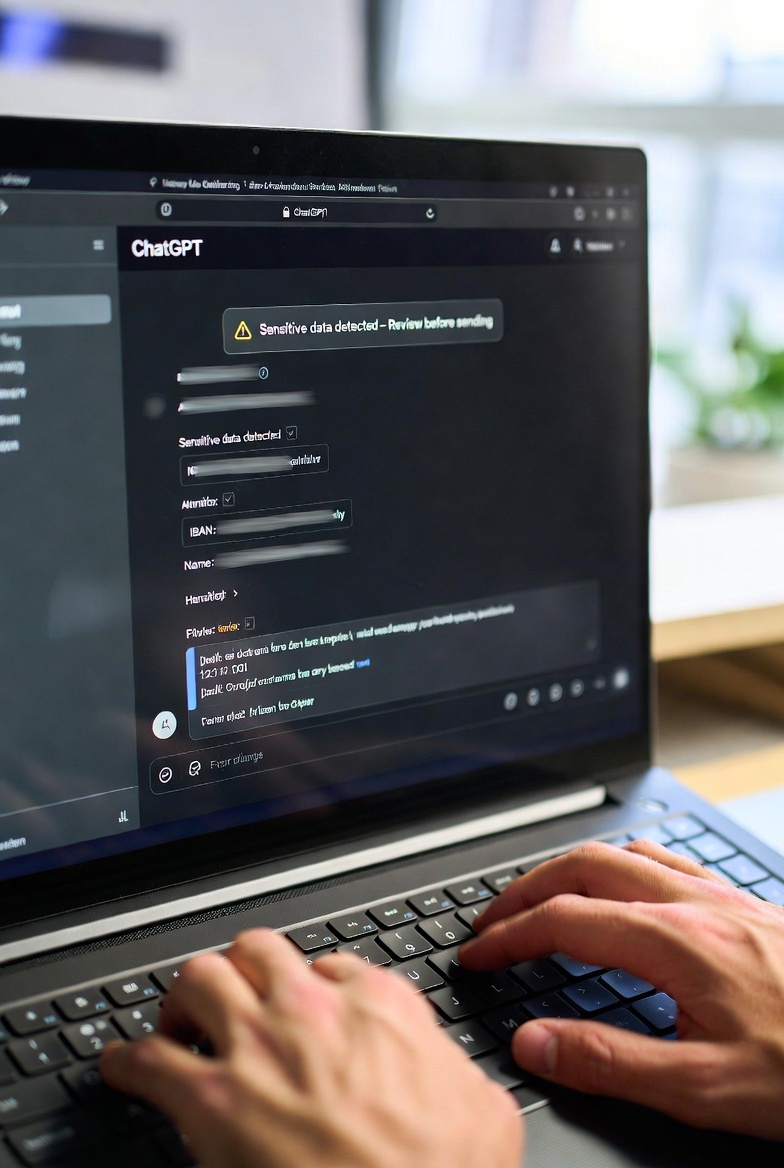

One effective way to address this risk is to introduce lightweight, client-side controls that analyse text before it reaches any AI service. Tools that run entirely in the browser can identify high-risk patterns — such as IBANs, credit card numbers, personal identifiers or internal documents — and either block the submission or alert the user in real time.
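To make the idea concrete, here is a minimal sketch of such a local precheck in TypeScript. It is an illustration only, not the implementation of any particular product: the names `precheck`, `Finding`, `passesLuhn` and `passesIbanCheck` are hypothetical, and the two patterns (IBANs and payment card numbers) are simplified assumptions. Candidates found by a regular expression are confirmed with their standard checksums (mod-97 for IBANs, Luhn for card numbers) to reduce false positives.

```typescript
// Hypothetical sketch: all names and patterns here are illustrative assumptions.
type Finding = { kind: "iban" | "card"; match: string };

// Luhn checksum, used by payment card numbers. Expects digits only.
function passesLuhn(digits: string): boolean {
  let sum = 0;
  let doubleNext = false;
  for (let i = digits.length - 1; i >= 0; i--) {
    let d = digits.charCodeAt(i) - 48; // "0" has char code 48
    if (doubleNext) {
      d *= 2;
      if (d > 9) d -= 9;
    }
    sum += d;
    doubleNext = !doubleNext;
  }
  return sum % 10 === 0;
}

// Mod-97 check for IBANs. Expects uppercase letters and digits, no spaces.
function passesIbanCheck(iban: string): boolean {
  const rearranged = iban.slice(4) + iban.slice(0, 4);
  let remainder = 0;
  for (let i = 0; i < rearranged.length; i++) {
    const code = rearranged.charCodeAt(i);
    // Letters map to 10..35 (A = 10); digits stay as they are.
    const value = code >= 65 && code <= 90 ? String(code - 55) : rearranged[i];
    for (let j = 0; j < value.length; j++) {
      remainder = (remainder * 10 + (value.charCodeAt(j) - 48)) % 97;
    }
  }
  return remainder === 1;
}

// Scans text locally and returns any checksum-valid IBANs or card numbers.
function precheck(text: string): Finding[] {
  const findings: Finding[] = [];

  // Two letters, two digits, then 11 to 30 alphanumerics, optionally space-separated.
  const ibanCandidates: string[] =
    text.match(/\b[A-Z]{2}\d{2}(?: ?[A-Z0-9]){11,30}\b/g) || [];
  ibanCandidates.forEach((raw) => {
    if (passesIbanCheck(raw.replace(/ /g, ""))) {
      findings.push({ kind: "iban", match: raw });
    }
  });

  // 13 to 19 digits, optionally separated by spaces or hyphens.
  const cardCandidates: string[] =
    text.match(/\b\d(?:[ -]?\d){12,18}\b/g) || [];
  cardCandidates.forEach((raw) => {
    if (passesLuhn(raw.replace(/[ -]/g, ""))) {
      findings.push({ kind: "card", match: raw });
    }
  });

  return findings;
}
```

In a browser, a function like this could be called from a paste or submit handler on the chat input, blocking or warning whenever the returned list is non-empty. A real deployment would need many more patterns (national identifiers, internal document markers) and careful tuning against false positives.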

Because these checks happen locally, no data leaves the device unless the user decides it is safe. This approach supports the data minimisation principle in GDPR and fits alongside compliance efforts under the EU AI Act. That’s why Trust-Prompt was built: to give teams a simple, on-device layer that stops accidental leaks before they happen.

What This Means for Organisations in 2026

With breach notifications continuing to rise and AI usage becoming standard practice, organisations need controls that act at the earliest possible stage. Preventing accidental exposure through public tools is no longer optional: it is becoming a core part of responsible AI, data protection and governance.