We did it! We have created the first privacy-first Chrome extension, TrustPrompt (also written Trust-Prompt). It protects AI users from accidental data leaks, a risk that keeps growing for companies.

In 2026, the use of artificial intelligence tools has become routine across industries. Yet recent data shows that many employees are turning to unapproved consumer AI platforms during work hours. A Microsoft UK study from October 2025 found that 71% of UK employees use AI tools that have not been officially approved by their organisations. In a team of twenty people, that means roughly fourteen are using such tools, often without realising the potential consequences.

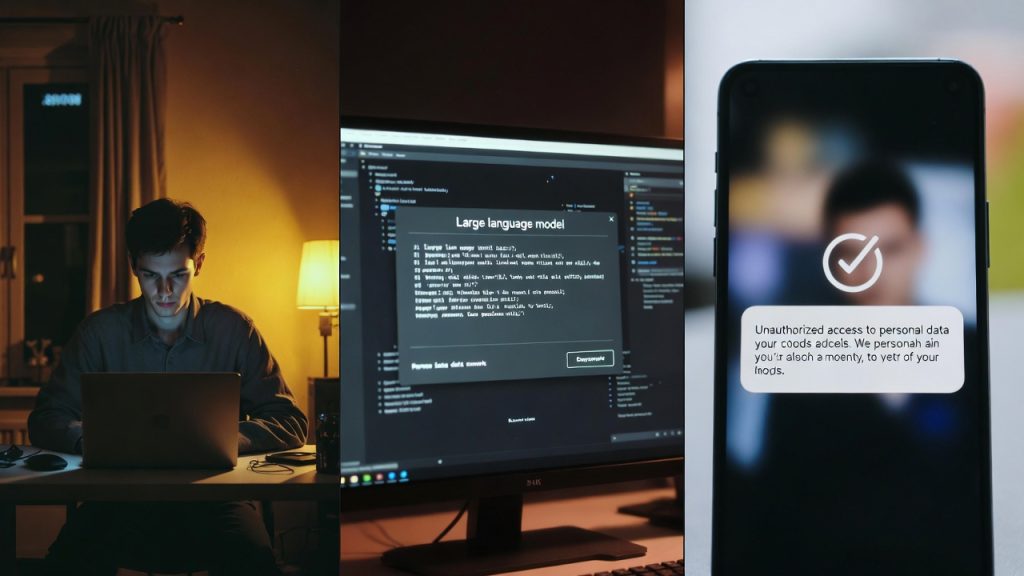

Copy-and-paste into LLMs is becoming the main driver of data leaks

The main problem arises when employees copy and paste sensitive information into these public AI services. According to research by Veritas Technologies, seven to eight out of ten employees admit to entering confidential data such as customer records, financial details, sales figures or intellectual property. Once submitted, the data may be stored, processed or even used to train commercial models, which can create several compliance violations at once.

For organisations operating under GDPR, each such incident can trigger three distinct breaches:

- Unauthorised international data transfer (data often routes through servers outside the EEA without proper safeguards)

- Violation of purpose limitation (data collected for specific business purposes is repurposed by the AI provider)

- Lack of processor authorisation (consumer AI services are rarely listed as approved sub-processors)

These risks are not limited to general business environments. Industrial companies face similar challenges when engineers or analysts use public AI tools to process proprietary designs, manufacturing processes or operational data. Many legacy systems in these sectors cannot support modern AI securely, leaving employees to rely on external tools that lack enterprise-grade controls.

AI governance strategies

CIOs and IT leaders are increasingly questioning their AI governance strategies. Without proper visibility, it becomes nearly impossible to know which tools are being used and what data flows through them. IBM’s 2025 Cost of a Data Breach Report found that one in five organisations (20%) suffered a breach involving shadow AI, and that high levels of shadow AI added around US$670,000 to the average breach cost. That figure does not capture the full reputational damage when clients discover governance weaknesses.

The broader trend shows that privacy concerns are accelerating. A 2026 Data Privacy & Protection Report by StartUs Insights highlights that more than 6,800 startups worldwide are developing solutions in areas such as encryption, consent management, secure data storage and AI-powered risk detection. Industries including healthcare, finance and manufacturing are adopting privacy-by-design approaches at scale. Yet many organisations still struggle to translate awareness into practical controls at the user level.

One promising direction involves client-side protection mechanisms that operate directly in the user’s browser. Such tools can analyse text in real time before it is submitted to any AI service, identifying high-risk patterns like financial identifiers, personal data or secrets. Because these checks happen locally, no information leaves the device unless the user explicitly approves it. This approach respects data minimisation principles and aligns with both GDPR and the EU AI Act without requiring complex infrastructure changes.
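To make the idea concrete, here is a minimal TypeScript sketch of such a local check. It is not TrustPrompt's implementation: the function names (`scanText`, `shouldSubmit`), the rule set and the regular expressions are hypothetical illustrations. The sketch flags email addresses, IBANs (confirmed with the ISO 13616 mod-97 checksum), card-like numbers (confirmed with the Luhn checksum to reduce false positives) and two example secret formats (AWS access key IDs and PEM private-key headers). All work happens in-process on the string, so nothing leaves the device.

```typescript
// Hypothetical sketch of a local, pre-submission scanner. The rule set, regexes
// and function names are illustrative assumptions, not TrustPrompt's actual code.

type FindingKind = "email" | "iban" | "card" | "secret";

interface Finding {
  kind: FindingKind;
  match: string;
  index: number; // offset of the match in the scanned text
}

// Luhn checksum: filters out digit runs that cannot be valid card numbers.
function passesLuhn(digits: string): boolean {
  let sum = 0;
  let double = false;
  for (let i = digits.length - 1; i >= 0; i--) {
    let d = digits.charCodeAt(i) - 48;
    if (double) {
      d *= 2;
      if (d > 9) d -= 9;
    }
    sum += d;
    double = !double;
  }
  return sum % 10 === 0;
}

// ISO 13616 mod-97 check: move the first four characters to the end,
// map letters A..Z to 10..35, and require the number mod 97 to equal 1.
function passesIbanCheck(raw: string): boolean {
  const iban = raw.replace(/\s+/g, "").toUpperCase();
  const rearranged = iban.slice(4) + iban.slice(0, 4);
  let remainder = 0;
  for (let i = 0; i < rearranged.length; i++) {
    const code = rearranged.charCodeAt(i);
    const value = code >= 65 && code <= 90 ? String(code - 55) : rearranged[i];
    // Process digit by digit so the running value never overflows.
    for (let j = 0; j < value.length; j++) {
      remainder = (remainder * 10 + Number(value[j])) % 97;
    }
  }
  return remainder === 1;
}

interface Rule {
  kind: FindingKind;
  pattern: RegExp; // must carry the /g flag so exec() can walk all matches
  validate?: (match: string) => boolean;
}

const RULES: Rule[] = [
  { kind: "email", pattern: /[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}/g },
  { kind: "iban", pattern: /\b[A-Z]{2}\d{2}(?: ?[A-Z0-9]){11,30}\b/g, validate: passesIbanCheck },
  { kind: "card", pattern: /\b\d(?:[ -]?\d){12,18}\b/g, validate: (m) => passesLuhn(m.replace(/\D/g, "")) },
  { kind: "secret", pattern: /\bAKIA[0-9A-Z]{16}\b/g }, // AWS access key ID format
  { kind: "secret", pattern: /-----BEGIN [A-Z ]*PRIVATE KEY-----/g }, // PEM private key header
];

// Runs every rule over the text locally and returns the validated matches.
function scanText(text: string): Finding[] {
  const findings: Finding[] = [];
  for (const rule of RULES) {
    rule.pattern.lastIndex = 0; // global regexes keep state between calls
    let m: RegExpExecArray | null;
    while ((m = rule.pattern.exec(text)) !== null) {
      if (!rule.validate || rule.validate(m[0])) {
        findings.push({ kind: rule.kind, match: m[0], index: m.index });
      }
    }
  }
  return findings;
}

// Clean text is allowed through; anything flagged needs explicit user approval.
function shouldSubmit(text: string, askUser: (findings: Finding[]) => boolean): boolean {
  const findings = scanText(text);
  return findings.length === 0 || askUser(findings);
}

// Example: a prompt that mixes an email address and an IBAN.
const samplePrompt = "Summarise the dispute with jane.doe@example.com, IBAN GB82 WEST 1234 5698 7654 32";
console.log(scanText(samplePrompt).map((f) => f.kind)); // logs [ 'email', 'iban' ]
```

In a browser extension, `askUser` would be wired to a warning dialog shown before the prompt is sent; passing it in as a function keeps the decision logic testable outside the browser. Pattern rules like these will miss free-text personal data such as names or health details, so a real tool would need broader detection than this sketch.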

Local blocking of data leaks can be the solution

By focusing on prevention at the moment of input, organisations can reduce the likelihood of accidental exposure while allowing employees to continue using AI tools productively. This is particularly valuable in regulated sectors where compliance teams need lightweight solutions that do not disrupt existing workflows.

Looking ahead, effective data protection in an AI-driven world requires a combination of measures: clear policies, regular audits, employee training and technical safeguards that work at the point of use. The most successful strategies treat privacy not as an afterthought but as a foundational layer of every technology process.

At the same time, companies must recognise that shadow AI is already widespread. The question has shifted from whether employees are using AI to whether leaders know what data is being shared and how it is protected.

For organisations seeking to strengthen their approach to AI-related data risks, exploring practical browser-level controls can be a meaningful first step.