As the digital landscape shifts into a new era of accountability, the European Union’s Artificial Intelligence (AI) Act has transitioned from a legislative framework into a rigorous operational reality. With the recent entry into force of several “preparatory obligations,” the window for voluntary experimentation is officially closing. For business leaders, this marks a fundamental pivot: AI compliance is no longer a “future problem” for the legal department, but a present-day requirement for risk governance and strategic leadership.

The latest developments in the EU AI Act confirm our long-standing view: the most successful organizations won’t be those with the most powerful models, but those with the most robust Trust-Prompt AI Governance layer.

From Innovation to Regulated Infrastructure

For years, AI has been treated as an experimental “bolt-on” capability, a tool used to gain incremental efficiencies. However, as of early 2026, the AI Act’s preparatory obligations signal that AI is now effectively treated as regulated infrastructure. This shift forces organizations to move beyond simply knowing what their AI does to understanding how and why it is used.

Ian Murrin, CEO of Digiteria and a leading voice on digital transformation, emphasizes that this transition is decisive. Accountability now extends across the entire supply chain. Organizations are responsible not only for the AI they develop in-house but also for the third-party models and tools they integrate into their workflows. This is particularly critical given the growing role of Shadow AI and the approaching August 2026 deadline, when the toughest requirements for high-risk systems take full effect.

The Rising Cost of the Governance Gap

The urgency of these preparatory steps is underscored by a sharp rise in security incidents. According to the latest industry reports, the number of data breaches in 2025 and 2026 has escalated, with cybercrime costs projected to hit $10.5 trillion globally. A significant portion of these breaches is attributed to the “Shadow AI” phenomenon: employees using unsanctioned tools such as public LLMs to process sensitive corporate data.

IBM’s 2025 Cost of a Data Breach report highlighted that organizations experiencing breaches due to Shadow AI faced costs nearly $700,000 higher than their peers. This governance gap illustrates a simple but urgent truth: the cost of ignoring evolving AI threats far outweighs the investment in proactive defenses. For many, the wake-up call came through high-profile incidents like the Betterment data breach or the infamous ChatGPT data leak, proving that even industry leaders are vulnerable without a dedicated strategy for the AI data breach risks facing European companies.

Navigating the 2026 Deadlines

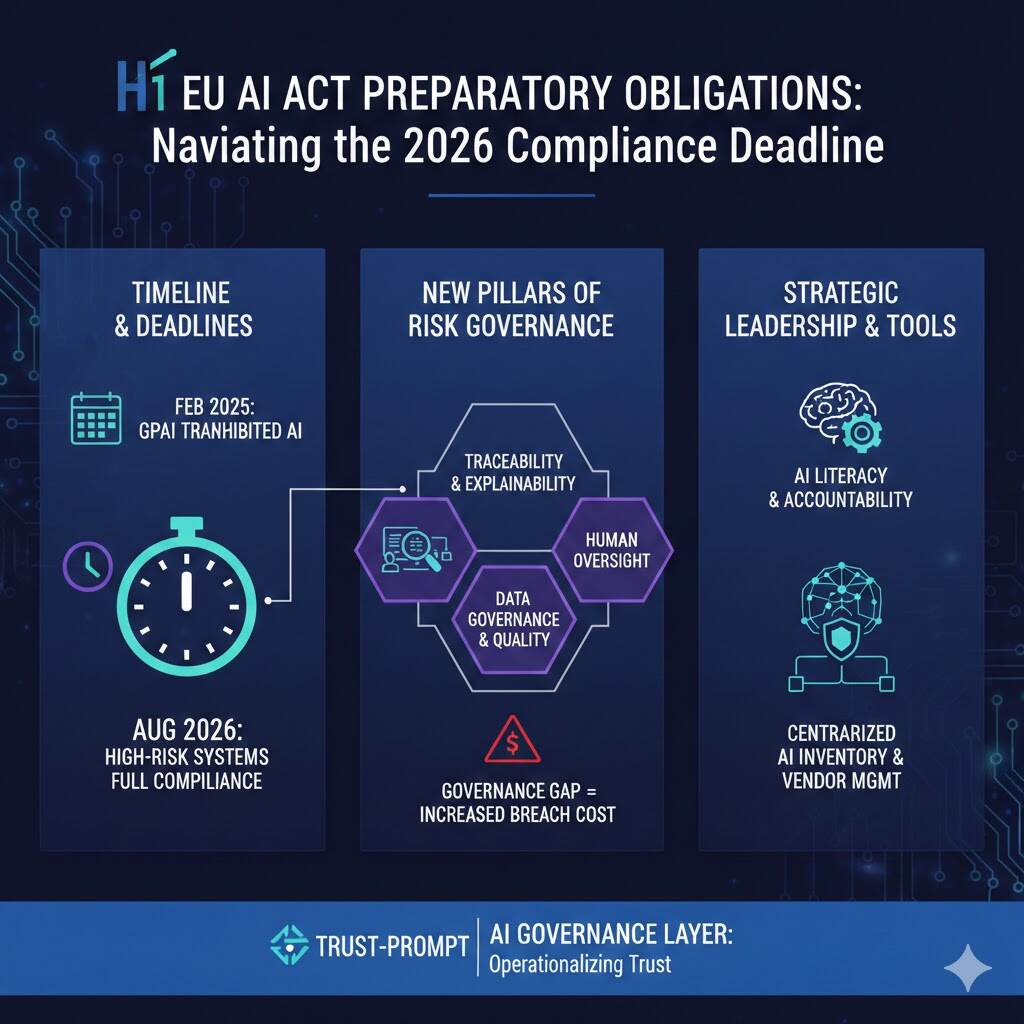

The roadmap to compliance is now clearly defined, and the milestones are non-negotiable. As of February 2025, prohibited AI practices—such as social scoring and manipulative behavioral systems—must have been phased out. By August 2025, providers of general-purpose AI (GPAI) must meet strict transparency and documentation standards.

The next major hurdle is August 2, 2026. This is the date when high-risk AI systems (those used in critical infrastructure, education, employment, and law enforcement) must fully comply with the Act’s most stringent requirements. These include:

- Traceability and Explainability: AI-driven outcomes must be explainable in human terms and traceable across their entire lifecycle.

- Human Oversight: High-risk systems must allow for meaningful human intervention and override mechanisms.

- Data Governance: Training and testing datasets must be representative, high-quality, and free of prohibited biases.

For sectors like Fintech and iGaming, where algorithmic decision-making is core to the business, safeguarding sensitive data is no longer just a best practice; it is a survival requirement. Even the European Parliament has disabled AI tools internally when they failed to meet these emerging standards, setting a precedent for public and private sectors alike.

Strategic Leadership: The Human Factor

Despite the technical complexity of the Act, the deciding factor for success is leadership, not just technology. “The organizations that win will treat compliance as infrastructure,” says Murrin. This involves mapping every AI use case, setting clear internal rules, and ensuring “AI Literacy” across the workforce.

Leadership must bridge the gap between innovation teams and risk management. This is where Trust-Prompt’s features come into play, providing the necessary visibility and control to manage AI interactions at scale. Understanding how Trust-Prompt works allows executives to see why it is the foundational layer for modern AI governance.

Operationalizing Trust

The shift from “policy ambition to operational reality” means that companies can no longer rely on vague ethical frameworks. They need a centralized inventory of AI systems, named accountability for AI-driven decisions, and rigorous vendor management.
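As a rough illustration of what a centralized inventory with named accountability might look like in practice, here is a minimal Python sketch. Everything in it is hypothetical: the `AISystemRecord` fields, the simplified `RiskTier` labels, and the `governance_gaps` checks are assumptions made for illustration, not a format prescribed by the AI Act or by any particular product. The only value taken from this article is the August 2, 2026 date for high-risk systems.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum
from typing import List, Optional


class RiskTier(Enum):
    # Simplified labels chosen for this sketch, not legal definitions.
    PROHIBITED = "prohibited"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Date cited in this article for high-risk system requirements.
HIGH_RISK_DEADLINE = date(2026, 8, 2)


@dataclass
class AISystemRecord:
    """One row in a hypothetical central AI inventory."""
    name: str
    vendor: str                               # "in-house" or a third-party supplier
    use_case: str
    risk_tier: RiskTier
    accountable_owner: Optional[str] = None   # a named person, not a team alias
    human_override: bool = False              # can a human intervene and override?


def governance_gaps(record: AISystemRecord) -> List[str]:
    """Return the basic governance gaps found for one inventory record."""
    gaps = []
    if record.risk_tier is RiskTier.PROHIBITED:
        gaps.append("prohibited practice: must be retired")
    if not record.accountable_owner:
        gaps.append("no named accountable owner")
    if record.risk_tier is RiskTier.HIGH and not record.human_override:
        gaps.append("high-risk system lacks human override")
    return gaps


def days_until_high_risk_deadline(today: date) -> int:
    """Days remaining before the high-risk deadline (negative once passed)."""
    return (HIGH_RISK_DEADLINE - today).days


if __name__ == "__main__":
    inventory = [
        AISystemRecord("cv-screener", "ExampleVendor", "candidate ranking",
                       RiskTier.HIGH),
        AISystemRecord("faq-bot", "in-house", "customer FAQ answers",
                       RiskTier.LIMITED, accountable_owner="J. Doe"),
    ]
    for record in inventory:
        print(record.name, governance_gaps(record))
```

Even a simple structure like this makes third-party tools visible alongside in-house systems and surfaces records that have no accountable owner, which is the kind of gap a vague ethical framework tends to leave unnoticed.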

As we move toward the final implementation phases of the Act, the focus is shifting toward “continuous post-market monitoring.” Compliance is not a one-time audit; it is a routine discipline. Organizations that embed these controls early—integrating them into the design process rather than retrofitting them—will be the ones to maintain their competitive edge in a regulated digital future.
