The latest report from the Stanford Institute for Human-Centered AI (HAI) delivers a clear message.

AI is advancing faster than ever.

But our ability to control it is not keeping up.

The report highlights a widening gap between:

- what AI systems can do

- how well we can manage and govern them

This gap is becoming one of the most critical challenges of 2026.

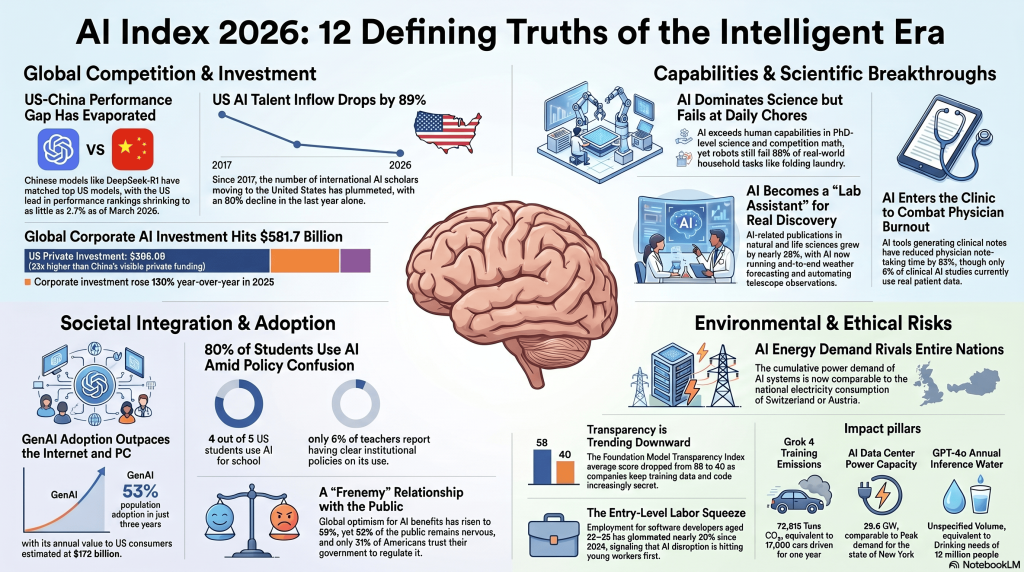

AI Is Growing Faster Than Any Technology Before

One of the most striking findings is the speed of AI adoption.

AI is scaling faster than:

- the personal computer

- the internet

Organizations are rapidly integrating AI into daily workflows.

Nearly every company is experimenting with or deploying AI systems.

At the same time:

- investment is increasing

- revenues are growing

- adoption is accelerating

This confirms that AI is no longer experimental. It is now core infrastructure.

AI Capabilities Are Accelerating Rapidly

The report shows that AI capabilities are not slowing down.

They are accelerating.

Modern AI systems can now:

- outperform humans on scientific benchmarks

- solve complex reasoning tasks

- reach near-human coding performance

In some cases, AI performance improved from 60% to nearly 100% in just one year.

This level of progress is unprecedented.

But it comes with new risks.

The “Jagged Frontier” Problem

Despite impressive capabilities, AI is not consistently reliable.

The report describes this as the “jagged frontier.”

This means:

- AI can solve highly complex problems

- but still fail at simple tasks

For example:

- AI can win math competitions

- but struggles with basic tasks like reading a clock

This inconsistency creates serious risks in real-world use.

AI Incidents Are Rising Rapidly

One of the most important findings is the increase in AI-related incidents.

The number of documented incidents rose from 233 in 2024 to 362 in 2025.

This shows a clear trend. As AI adoption increases, so do failures and risks.
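
To put the two incident counts in proportion, the short calculation below works out the year-over-year change. It uses only the figures quoted in this section; the variable names are illustrative.

```python
# Incident counts as quoted in this section.
incidents_2024 = 233
incidents_2025 = 362

# Absolute and relative year-over-year change.
absolute_increase = incidents_2025 - incidents_2024
relative_increase = absolute_increase / incidents_2024

print(f"Absolute increase: {absolute_increase} incidents")  # 129 incidents
print(f"Relative increase: {relative_increase:.1%}")        # 55.4%
```

In other words, documented incidents grew by roughly 55% in a single year.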

The Governance Gap Behind Data Breaches in 2026

This leads to one of the most critical insights.

There is a governance gap behind data breaches in 2026.

AI systems are being deployed faster than:

- governance frameworks

- security controls

- compliance structures

Organizations are adopting AI.

But they are not controlling it properly.

This creates:

- data leaks

- compliance risks

- security vulnerabilities

The gap is no longer theoretical.

It is already visible in real-world incidents.

Transparency Is Decreasing While Risk Is Increasing

Another important trend is the decline in transparency.

More than 90% of advanced AI models are now developed by private companies.

This creates several challenges:

- less visibility into how models work

- limited access to training data

- reduced accountability

As systems become more powerful, they also become more opaque.

This increases risk for:

- companies

- regulators

- users

AI Is Already Disrupting the Workforce

The impact on jobs is no longer theoretical.

It is already happening.

The report shows that:

- entry-level roles are being affected first

- younger workers are most impacted

AI is replacing tasks that were traditionally used for learning and training.

This creates a new challenge: the loss of the apprenticeship model.

At the same time, expectations for workers are increasing.

They must now:

- use AI tools

- validate outputs

- take responsibility for results

Public Trust and Expert Views Are Diverging

The report also highlights a growing divide.

AI experts are optimistic.

The public is not.

For example:

- 73% of experts believe AI has a positive impact on jobs

- only 23% of the public agrees

This trust gap is critical because adoption depends on confidence.

Without trust, AI cannot scale sustainably.

Environmental Impact Is Becoming a Major Concern

AI is not only a digital problem.

It is also an environmental one.

Training advanced models now requires massive resources.

For example:

- training a single model can produce tens of thousands of tons of CO2 emissions

This raises important questions:

- Is AI sustainable?

- Who bears the cost?

As AI grows, these concerns will become more important.

Why Current Security Approaches Are Failing

The report makes one thing clear.

We are not prepared.

Current approaches focus on:

- policies

- guidelines

- post-incident responses

But they miss the most critical point:

👉 where data is created and shared

This is why many organizations remain vulnerable, even with strong cybersecurity frameworks.

The Missing Layer: Control at the Prompt Level

One of the biggest blind spots is the prompt itself.

Every AI interaction starts with a prompt.

This is where:

- sensitive data is entered

- decisions are made

- risks are created

Yet most systems:

- do not monitor prompts

- do not control inputs

- do not prevent exposure

This is a fundamental design flaw.

How Trust-Prompt Addresses This Gap

This is where Trust-Prompt becomes relevant.

Trust-Prompt is designed as an AI governance layer.

It operates at the most critical moment: before data is sent.

Trust-Prompt:

- analyzes prompts locally

- detects sensitive information

- blocks or warns users in real time

This approach directly addresses the governance gap.

Instead of reacting to breaches, it prevents them.
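
To make the idea of a prompt-level check concrete, here is a minimal sketch in Python. It is not Trust-Prompt's actual implementation, which this article does not describe. The three patterns, the `BLOCKING` policy, and the function names `scan_prompt` and `decide` are all assumptions chosen for illustration. It only shows the general shape: analyze the prompt locally, detect sensitive values, then block, warn, or allow.

```python
import re
from dataclasses import dataclass

# Hypothetical detection rules for illustration only; a real tool would need
# far broader and more careful coverage than these three patterns.
PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "iban": re.compile(r"\b[A-Z]{2}\d{2}[A-Z0-9]{11,30}\b"),
    "api_key": re.compile(r"\bsk-[A-Za-z0-9]{16,}\b"),
}

# Hypothetical policy: these kinds block the prompt, all others only warn.
BLOCKING = {"iban", "api_key"}


@dataclass
class Finding:
    kind: str
    text: str


def scan_prompt(prompt: str) -> list[Finding]:
    """Return every match of a known sensitive-data pattern in the prompt."""
    findings = []
    for kind, pattern in PATTERNS.items():
        for match in pattern.finditer(prompt):
            findings.append(Finding(kind, match.group()))
    return findings


def decide(prompt: str) -> str:
    """Return 'block', 'warn', or 'allow' before anything is sent."""
    kinds = {finding.kind for finding in scan_prompt(prompt)}
    if kinds & BLOCKING:
        return "block"
    if kinds:
        return "warn"
    return "allow"


print(decide("Summarize this article about AI governance."))  # allow
print(decide("Draft a reply to anna@example.com"))            # warn
print(decide("Debug this: key = sk-abcdefghijklmnop1234"))    # block
```

Everything in this sketch runs on the user's machine and no network call is involved, which is the property the section above emphasizes. Simple regular expressions will miss many cases and flag some harmless ones, so a production tool would likely combine several detection methods.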

Why Local Processing Is Critical

The key principle is simple: the safest data is the data that never leaves the device.

Once data is sent:

- it can be stored

- copied

- leaked

Local processing eliminates this risk.

This aligns with:

- GDPR principles such as data minimization

- EU AI Act requirements

- modern cybersecurity strategies
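
One way to apply the local-processing principle is to replace sensitive values with placeholders before a prompt leaves the device, and to keep the mapping only in local memory. The sketch below illustrates that idea under stated assumptions: the two patterns and the `redact` and `restore` helpers are hypothetical and do not describe how any specific product works.

```python
import re

# Hypothetical patterns for illustration; not an exhaustive or official list.
SENSITIVE = [
    ("EMAIL", re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")),
    ("PHONE", re.compile(r"\+\d{1,3}[ -]?\d{2,4}(?:[ -]?\d{2,4}){2,3}")),
]


def redact(prompt: str) -> tuple[str, dict[str, str]]:
    """Replace sensitive values with placeholders; the mapping stays local."""
    mapping: dict[str, str] = {}

    for label, pattern in SENSITIVE:
        def substitute(match: re.Match, label: str = label) -> str:
            # Number placeholders in the order the values are found.
            placeholder = f"[{label}_{len(mapping) + 1}]"
            mapping[placeholder] = match.group()
            return placeholder

        prompt = pattern.sub(substitute, prompt)

    return prompt, mapping


def restore(text: str, mapping: dict[str, str]) -> str:
    """Put the original values back into a response, entirely on the device."""
    for placeholder, original in mapping.items():
        text = text.replace(placeholder, original)
    return text


original = "Email anna@example.com or call +49 30 1234 5678."
safe, mapping = redact(original)
print(safe)                    # Email [EMAIL_1] or call [PHONE_2].
print(restore(safe, mapping))  # Email anna@example.com or call +49 30 1234 5678.
```

Only the redacted string would be sent to an external AI service. The original values and the mapping never leave the device, so a stored or leaked prompt reveals placeholders rather than personal data.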

The Role of Trust Prompt Plus

For more advanced use cases, Trust Prompt Plus provides additional capabilities.

It enables:

- enhanced detection

- more control options

- improved usability

This is especially relevant for:

- professionals

- organizations

- compliance-driven environments

As AI adoption grows, such tools become essential.

AI Governance Is Becoming a Competitive Advantage

The report suggests a shift.

AI is no longer just about capability.

It is about governance.

Organizations that manage AI effectively will:

- reduce risk

- build trust

- gain competitive advantage

This aligns with broader trends seen across the news landscape.

AI is moving from innovation to infrastructure.

Lessons from Other Data Incidents

The governance gap is not theoretical.

It is already visible in real-world cases such as:

- Europol dismantles major data leak

- EU Commission data theft incident

- Alloha Fibra cyberattack by Gentlemen

These incidents show that data exposure often results from weak controls, not just hacking.

AI Security Risks Are Increasing Rapidly

The findings also connect to broader discussions such as “AI Security Risks: Anthropic Mythos Explained.”

AI systems introduce new types of risks:

- unpredictable behavior

- misuse

- lack of accountability

These risks require new solutions.

Traditional security models are not enough.

The Future of AI: Control or Chaos

The Stanford AI Index 2026 makes one thing clear.

We are at a turning point.

AI is becoming:

- more powerful

- more integrated

- more impactful

But without proper governance, risks will increase.

The key question is whether we can control AI as fast as we develop it.

Final Thoughts

The AI revolution is not slowing down.

But governance is falling behind.

This creates a dangerous imbalance.

Organizations must act now.

They need:

- better visibility

- stronger controls

- proactive solutions

The future of AI will not be defined by capability alone.

It will be defined by how well we manage it.