In the rapidly evolving digital landscape, artificial intelligence has become an indispensable tool for businesses across Europe. From automating routine tasks to generating creative content, AI promises unprecedented productivity gains. However, a silent storm is brewing within many organizations: Shadow AI. This refers to the unauthorized use of AI tools by employees, bypassing official IT channels and oversight. Once considered a minor annoyance, Shadow AI has now escalated into a critical governance and compliance crisis, especially as Europe hurtles towards the August 2026 enforcement of the groundbreaking EU AI Act.

This article examines the current state of Shadow AI in Europe, the regulatory pressure behind it, emerging threats, and pragmatic strategies for bringing this hidden risk to European companies into the light.

What is Shadow AI and Why is it Surging in Europe?

Shadow AI occurs when employees adopt AI tools—be it large language models (LLMs), AI art generators, or specialized AI assistants—without their organization’s official sanction or vetting. This phenomenon is often driven by a genuine desire for efficiency and innovation. Employees, eager to meet demanding productivity targets, frequently turn to readily available, powerful, and often free AI tools to streamline their workflows.

While the intention might be good, the implications for businesses are severe. The sheer accessibility of AI, coupled with a lack of clear internal policies or approved alternatives, has fueled its proliferation in the European workplace. Studies indicate that while over 80% of Fortune 500 companies actively use AI, a staggering 30% of employees in Europe are resorting to unapproved tools to maintain their competitive edge. This creates a vast, unmonitored landscape of data processing and decision-making, far outside the visibility of IT and compliance teams.

The EU AI Act: Shadow AI’s Unforgiving Deadline

The most significant driver intensifying the Shadow AI crisis in Europe is the approaching date of August 2, 2026, when most of the EU AI Act's obligations begin to apply. This landmark legislation regulates AI systems according to their potential to cause harm, sorting them into four tiers: unacceptable, high, limited, and minimal risk.

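The critical link to Shadow AI: the Act does not name Shadow AI as such, but its obligations attach to how an AI system is used. An unmanaged tool applied to a high-risk use case can therefore leave the organization in breach of duties it does not even know it has.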

Imagine a scenario where an employee uses an unvetted, consumer-grade AI tool to assist in sensitive processes like:

- Recruitment: Screening CVs or drafting job descriptions, potentially introducing biases.

- Credit Scoring: Aiding in financial assessments, impacting individuals’ access to credit.

- Medical Diagnosis Support: Utilizing AI for preliminary analysis without proper validation.

If such “high-risk” applications are powered by unauthorized AI, organizations face severe penalties. The EU AI Act sets fines of up to €35 million or 7% of global annual turnover for prohibited AI practices, and up to €15 million or 3% for breaches of most other obligations, including those covering high-risk systems. This makes the invisible use of AI a tangible, potentially existential threat to European businesses. The ChatGPT Data Leak incident served as an early warning of the kind of data exposure possible with unregulated AI use.
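To make the penalty arithmetic concrete, here is a minimal sketch of how the fine ceiling could be estimated. It assumes the two tiers set out in Article 99 of the Act (€35 million or 7% for prohibited practices, €15 million or 3% for most other obligations), and that the higher of the two figures applies to ordinary undertakings while the lower applies to SMEs. The tier labels and the function name are hypothetical, chosen for this illustration only, and the result is a ceiling, not a prediction of any actual fine.

```python
from decimal import Decimal

# Fine tiers: (fixed cap in EUR, share of worldwide annual turnover).
# The tier names are labels invented for this sketch.
FINE_TIERS = {
    "prohibited_practice": (Decimal("35000000"), Decimal("0.07")),
    "other_obligations": (Decimal("15000000"), Decimal("0.03")),
}


def fine_ceiling(tier: str, worldwide_turnover_eur: Decimal, is_sme: bool = False) -> Decimal:
    """Return the maximum possible fine in EUR for a given tier and turnover."""
    fixed_cap, turnover_share = FINE_TIERS[tier]
    turnover_cap = worldwide_turnover_eur * turnover_share
    # Assumption: undertakings face the higher of the two caps, SMEs the lower.
    if is_sme:
        return min(fixed_cap, turnover_cap)
    return max(fixed_cap, turnover_cap)


if __name__ == "__main__":
    # A group with EUR 1bn turnover: 7% of turnover exceeds the EUR 35m fixed cap.
    print(fine_ceiling("prohibited_practice", Decimal("1000000000")))
    # A company with EUR 100m turnover: the fixed cap is the larger figure.
    print(fine_ceiling("other_obligations", Decimal("100000000")))
```

For a large group, the turnover-based figure quickly dominates, which is why the percentage matters more than the headline euro amount.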

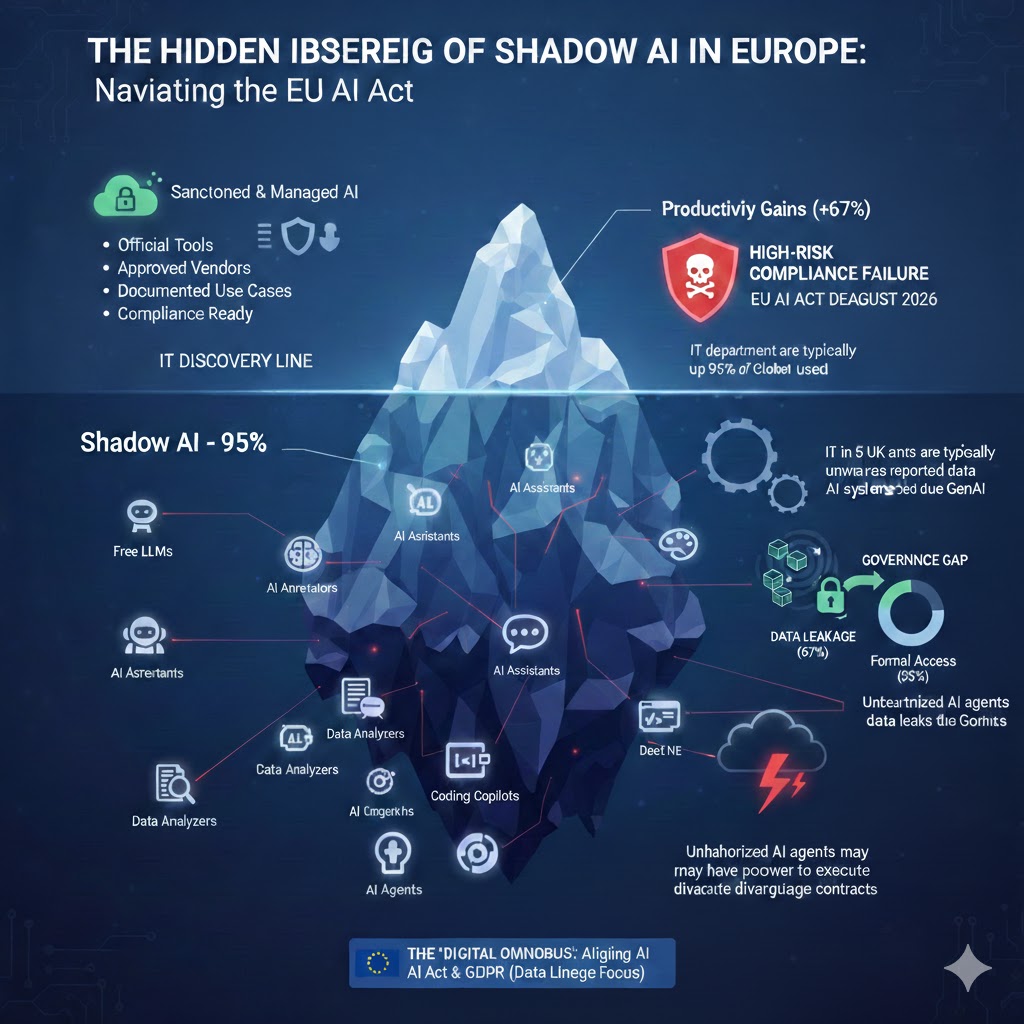

Infographic: The Hidden Iceberg of Shadow AI

- Above the water (“Sanctioned & Managed AI,” roughly 5-10% of the iceberg): official tools, approved vendors, documented use cases, compliance ready. Managed AI is shown with productivity gains of +67%.

- Water line: the “IT Discovery Line.”

- Below the water (“Shadow AI,” roughly 90-95% of the iceberg): free LLMs, AI assistants, AI generators, data analyzers, coding copilots, and AI agents.

- High-Risk Compliance Failure: EU AI Act deadline of August 2026; fines of up to €35M or 7% of global revenue.

- Governance Gap: IT departments are typically unaware of up to 95% of the AI systems being used.

- Data Leakage: 1 in 5 UK and EU companies reported data leaks due to GenAI.

- New Threats, Memory Poisoning and Agentic AI: unauthorized AI agents may have the power to execute disadvantageous contracts.

- EU Policy Context: the “Digital Omnibus,” aligning the AI Act and GDPR with a focus on data lineage.

The Risks for European Companies Beyond Fines

While financial penalties are a major concern, the dangers of Shadow AI extend far beyond regulatory fines:

- Data Leakage and Security Breaches: Employees often input sensitive company data, intellectual property, or personally identifiable information (PII) into public AI models that lack enterprise-grade security. This poses a significant risk of data exposure, with reports indicating that 1 in 5 UK and EU companies have experienced data leaks due to generative AI in the past year.

- Bias and Inaccuracy: Unvetted AI tools can produce biased or inaccurate outputs, leading to flawed decision-making, reputational damage, and legal challenges, especially in areas like HR or customer service.

- Intellectual Property Loss: Proprietary algorithms, product designs, or strategic documents fed into external AI systems can become part of the training data for those systems, effectively becoming public or accessible to competitors.

- Emerging Threats: Memory Poisoning and Agentic AI: The threat landscape is constantly evolving. A recent development, Memory Poisoning, involves attackers manipulating the “memory” of AI assistants. Because shadow tools often lack robust security controls, they are vulnerable to being “poisoned” into generating biased or fraudulent outputs. Furthermore, the rise of “Agentic AI” (AI that can perform actions such as booking or signing) means unauthorized agents could execute disadvantageous contracts or take other harmful actions on behalf of the company.

From Banning to Betterment: A New Approach to AI Governance

In the wake of the Betterment data breach, and recognizing that outright AI bans are futile (they often drive usage underground), European organizations, financial services firms among them, are pivoting towards a strategy of “responsible enablement” and Sovereign AI.

This shift involves:

- AI Discovery Platforms: Implementing tools that can actively detect and monitor AI usage across the corporate network. This provides IT and compliance teams with crucial visibility into the “shadow” realm.

- Establishing Clear Policies: Developing clear, concise, and accessible policies on acceptable AI use, outlining approved tools, data handling guidelines, and consequences for non-compliance.

- Providing Sanctioned Alternatives: Instead of just saying “no,” organizations are offering approved, GDPR-compliant AI tools and environments. This might include:

  - European-hosted LLMs: Leveraging services from providers like Mistral AI or OVHcloud that ensure data residency and adherence to EU regulations.

  - Internal AI Sandboxes: Creating secure, internal environments where employees can experiment with AI tools using dummy data or less sensitive information.

- Employee Training and Awareness: Educating employees on the risks of Shadow AI, the importance of data privacy, and how to use approved tools effectively and ethically. This cultivates an “AI-aware” culture.

- Data Lineage and Governance: Focusing on understanding where data originates, how it is processed by AI, and where it ultimately resides. This is particularly relevant with the European Commission’s discussions around a “Digital Omnibus” package to align the AI Act with GDPR, simplifying data lineage tracking for organizations.
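The discovery and policy steps above can be illustrated with a small sketch that scans web proxy logs for traffic to known AI services and separates sanctioned from shadow usage. Everything specific here is an assumption made for illustration: the log format (`<user> <url>` per line), the domain lists, and the choice of which service counts as sanctioned. A commercial AI discovery platform would draw on far richer signals than hostnames.

```python
from collections import Counter
from urllib.parse import urlparse

# Hypothetical lists for illustration; a real deployment would maintain
# these from its own vendor reviews and approved-tool policy.
KNOWN_AI_DOMAINS = {"chat.openai.com", "chat.mistral.ai", "claude.ai", "gemini.google.com"}
SANCTIONED_AI_DOMAINS = {"chat.mistral.ai"}


def classify_ai_traffic(log_lines):
    """Count requests to known AI services, split into sanctioned and shadow.

    Each log line is assumed to look like "<user> <url>".
    Returns two Counters keyed by (user, hostname).
    """
    sanctioned, shadow = Counter(), Counter()
    for line in log_lines:
        parts = line.split()
        if len(parts) != 2:
            continue  # skip malformed lines
        user, url = parts
        host = (urlparse(url).hostname or "").lower()
        if host not in KNOWN_AI_DOMAINS:
            continue  # not an AI service we know about
        bucket = sanctioned if host in SANCTIONED_AI_DOMAINS else shadow
        bucket[(user, host)] += 1
    return sanctioned, shadow


if __name__ == "__main__":
    sample = [
        "alice https://chat.openai.com/c/1",
        "bob https://chat.mistral.ai/chat",
        "carol https://intranet.example.com/",
    ]
    sanctioned, shadow = classify_ai_traffic(sample)
    for (user, host), count in shadow.items():
        print(f"shadow AI: {user} -> {host} ({count} requests)")
```

Keeping the allowlist as data rather than code means the policy team can update the approved tools without touching the detection logic.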

Table: Key Pillars of Effective AI Governance in Europe

| Pillar | Description | Trust-Prompt.com Solution Focus |
| --- | --- | --- |
| Visibility | Detecting and monitoring all AI usage (sanctioned & shadow). | AI Discovery & Audit Tools |
| Policy | Clear, enforceable guidelines for responsible AI use. | Policy Framework Development |
| Enablement | Providing secure, compliant, and approved AI alternatives. | Secure AI Sandboxes & Tool Vetting |
| Education | Training employees on AI risks, ethics, and best practices. | AI Awareness & Training Modules |
| Compliance | Ensuring adherence to the EU AI Act, GDPR, and other regulations. | Risk Assessment & Remediation |

Safeguarding Sensitive Data in the Age of AI

The core of combating Shadow AI lies in protecting your organization’s most valuable asset: its data. Here are actionable steps to fortify your data against the risks posed by unauthorized AI:

- Implement Data Loss Prevention (DLP) for AI: Deploy DLP solutions that can identify and block sensitive data from being copied or pasted into unapproved AI applications.

- Adopt Secure Enterprise-Grade LLMs: Prioritize AI solutions designed for enterprise use, offering robust security, data privacy assurances, and audit trails. Consider private LLM deployments or models specifically designed to keep your data isolated.

- Regular Audits and Assessments: Conduct periodic audits of AI usage and perform risk assessments on new AI tools before they are introduced into the workflow.

- Encryption and Anonymization: Where possible, ensure data is encrypted both in transit and at rest, and explore anonymization techniques when using AI for analysis to reduce the risk of re-identification.
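As a rough illustration of the DLP and anonymization steps above, the sketch below screens a prompt before it leaves the organization: it blocks sensitive content bound for an unapproved tool and redacts it for an approved one. The two regular expressions, the placeholder format, and the function names are assumptions made for this example. Real DLP products use much broader detection (checksums, classifiers, document fingerprints), and simple pattern redaction alone does not guarantee protection against re-identification.

```python
import re

# Illustrative patterns only; production DLP needs far broader coverage
# and validation (for example IBAN checksums) to limit false positives.
SENSITIVE_PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "iban": re.compile(r"\b[A-Z]{2}\d{2}(?: ?[A-Z0-9]{4}){3,7}(?: ?[A-Z0-9]{1,3})?\b"),
}


def find_sensitive(text):
    """Return {label: [matches]} for every pattern found in the text."""
    hits = {}
    for label, pattern in SENSITIVE_PATTERNS.items():
        matches = pattern.findall(text)
        if matches:
            hits[label] = matches
    return hits


def redact(text):
    """Replace each sensitive match with a placeholder such as [EMAIL]."""
    for label, pattern in SENSITIVE_PATTERNS.items():
        text = pattern.sub(f"[{label.upper()}]", text)
    return text


def screen_prompt(text, destination_approved):
    """Block sensitive prompts to unapproved tools; redact for approved ones."""
    hits = find_sensitive(text)
    if hits and not destination_approved:
        raise PermissionError(f"blocked: prompt contains {sorted(hits)}")
    return redact(text)


if __name__ == "__main__":
    prompt = "Refund jane.doe@example.com via DE89 3704 0044 0532 0130 00 today"
    print(screen_prompt(prompt, destination_approved=True))
```

Blocking for unapproved destinations while redacting for approved ones mirrors the “responsible enablement” approach: employees keep a usable path instead of hitting a blanket ban.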

External Resources for Further Reading:

- ENISA – Cybersecurity of AI: The European Union Agency for Cybersecurity offers valuable insights and reports on AI security risks. Explore their publications on ENISA’s AI Security page.

- Gartner Report on Shadow AI: For industry research and trends, look for recent reports from Gartner on AI governance and shadow IT (e.g., Gartner insights on AI governance).

Conclusion

The rise of Shadow AI presents a formidable challenge for European businesses, intensified by the fast-approaching EU AI Act deadline. However, by understanding the risks, shifting from prohibitive bans to proactive enablement, and investing in robust AI governance frameworks, organizations can transform this challenge into an opportunity for secure and compliant innovation. At Trust-Prompt.com, we are committed to helping your business navigate this complex landscape, ensuring your AI journey is both productive and compliant.